Anthropic Launches Project Glasswing to Use AI for Defensive Cybersecurity

Anthropic announced Project Glasswing on April 8, 2026, giving select partners including Amazon, Apple, Google, Microsoft, and Nvidia early access to its unreleased Claude Mythos Preview model for defensive cybersecurity work. The frontier AI system demonstrated exceptional performance on coding benchmarks and uncovered thousands of previously unknown vulnerabilities in critical software and hardware systems. Access remains tightly restricted to that defensive use, and findings are to be shared industry-wide.

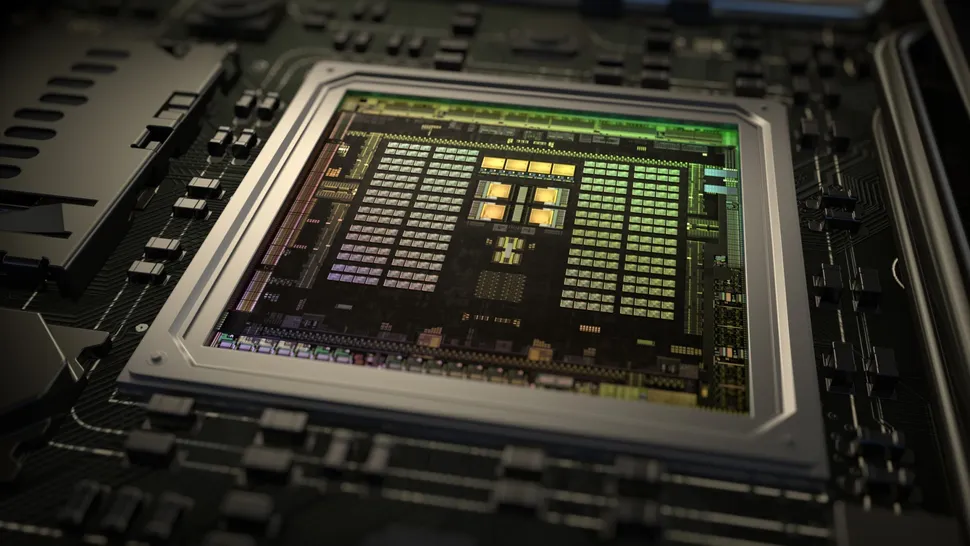

Anthropic announced Project Glasswing on April 8, 2026, an initiative that gives select partners early access to its unreleased Claude Mythos Preview model for defensive cybersecurity work. Partners include Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, Microsoft, Nvidia, and Palo Alto Networks.

The frontier AI system demonstrated exceptional performance on coding benchmarks and uncovered thousands of previously unknown vulnerabilities in critical software and hardware systems during testing. Anthropic says the model is powerful enough to uncover serious software flaws across major operating systems and browsers. Access remains tightly restricted to defensive cybersecurity work, and findings are to be shared industry-wide to strengthen collective defenses.

The company is steering the model toward defensive cybersecurity rather than a broad public release, recognizing that AI labs are no longer just racing to build stronger models; they are also racing to prevent those models from becoming offensive cyber tools. Project Glasswing marks the first large-scale, collaborative effort by Big Tech to apply frontier AI to defensive cybersecurity at enterprise scale, potentially reshaping how the industry addresses zero-day threats.

The announcement comes amid heightened cybersecurity concerns: Iran-affiliated actors are exploiting internet-exposed programmable logic controllers in U.S. critical infrastructure, and North Korea-linked actors have deployed over 1,700 malicious packages impersonating developer tools across multiple software repositories. The U.S. National Institute of Standards and Technology (NIST) is also launching initiatives to define security standards for AI agents, recognizing the new attack surface they introduce.